A tiny inertial transformer for human activity recognition via multimodal knowledge distillation and explainable AI - Nature

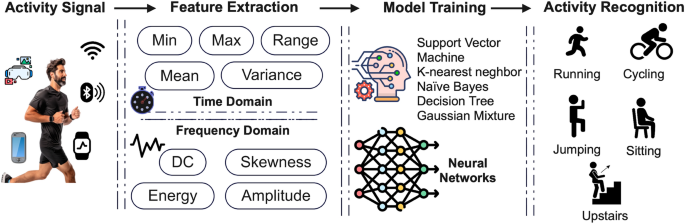

over time. While this introduces more noise compared to depth-based sensors like Kinect, it offers a more realistic representation of the visual input variability encountered in real-world scenarios. Concurrently, inertial data is collected via wearable IMUs placed on various body parts, such as the wrists, ankles, and torso, which measure both acceleration and angular velocity at high sampling rates. For our purposes, the teacher model is trained using both modalities, and the student model uses only inertial data.

As these systems are increasingly adopted in sensitive applications such as elderly care or physical rehabilitation, stakeholders must be able to trust and understand the system's decisions17. Current transformer-based HAR models provide little transparency into their inner workings18, which makes them difficult to interpret or debug in practice.

(1) ViT-inspired temporal modeling
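A teacher-student arrangement like the one described above is commonly trained with a distillation objective. The sketch below assumes the classic response-based formulation: temperature-softened soft targets from the teacher, combined with a hard-label cross-entropy term for the student. The function names `log_softmax` and `kd_loss`, the temperature of 4.0, the weight `alpha = 0.5`, and the example logits are all hypothetical illustrations. They are not the loss or hyperparameters used in this work.

```python
import math
from typing import List, Sequence


def log_softmax(logits: Sequence[float], temperature: float = 1.0) -> List[float]:
    """Numerically stable log-softmax of logits divided by a temperature."""
    scaled = [z / temperature for z in logits]
    m = max(scaled)
    log_sum = m + math.log(sum(math.exp(z - m) for z in scaled))
    return [z - log_sum for z in scaled]


def kd_loss(
    student_logits: Sequence[float],
    teacher_logits: Sequence[float],
    label: int,
    temperature: float = 4.0,  # hypothetical value, not taken from the paper
    alpha: float = 0.5,        # hypothetical weight on the hard-label term
) -> float:
    """Response-based distillation loss for a single sample (illustrative sketch)."""
    # Hard-label cross-entropy for the inertial-only student (T = 1).
    ce = -log_softmax(student_logits)[label]

    # Temperature-softened log-probabilities from teacher and student.
    log_p_teacher = log_softmax(teacher_logits, temperature)
    log_p_student = log_softmax(student_logits, temperature)

    # KL(teacher || student) summed over activity classes.
    kl = sum(
        math.exp(lt) * (lt - ls) for lt, ls in zip(log_p_teacher, log_p_student)
    )

    # T^2 rescaling keeps soft-target gradients comparable across temperatures.
    return alpha * ce + (1.0 - alpha) * temperature ** 2 * kl


# Hypothetical logits for one IMU window over three activity classes.
student = [1.2, 0.3, -0.5]   # inertial-only student
teacher = [2.0, 0.1, -1.0]   # multimodal (video + IMU) teacher
print(round(kd_loss(student, teacher, label=0), 4))
```

In such a setup, `teacher_logits` would come from the multimodal teacher and `student_logits` from the inertial-only student for the same time window. Only the student's parameters would be updated. At deployment, the student then needs no video input at all.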